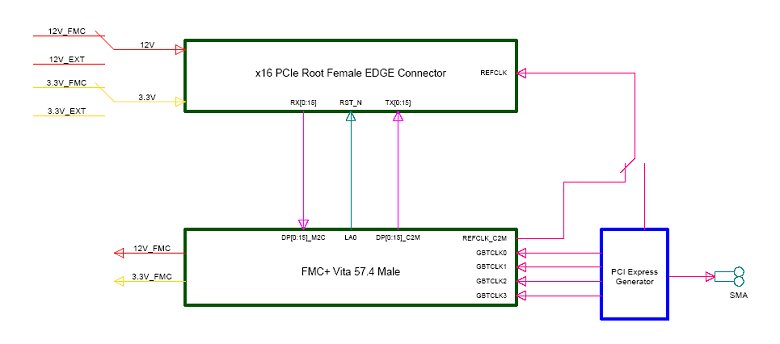

For technologies such as GPU Direct, this means rapid RDMA transfers between the GPUs and the network (which can significantly improve performance). Having one additional x8 slot on each CPU allows for the accelerators to communication directly with storage or high-speed networks without leaving the PCI-E root complex. Although these slots are not compatible with accelerator cards, they are excellent for networking and/or storage cards. The remaining 8 lanes of PCI-E from each CPU (along with 4 lanes from the Southbridge chipset) provide connections for the remaining PCI-E slots. PCI-Express switches (the purple boxes labeled PEX8747) further expand each CPU’s tree out to four x16 PCI-Express gen 3.0 slots.Each CPU provides 32 lanes of PCI-Express generation 3.0 (split as two x16 connections).Two CPUs are shown in blue at the bottom of the diagram.It’s a bit difficult to parse, but the important points are: To illustrate, let’s look at the PCI-Express design of Microway’s latest 8-GPU Octoputer server: We dive deeply into these issues in our post about Common PCI-Express Myths.

Servers and workstations with multiple processors have multiple PCI-Express root complexes. Additionally, certain high-performance features – such as NVIDIA’s GPU Direct technology – require that all components be attached to the same PCI-Express root complex. Unfortunately, it’s not so simple, because each CPU only has a certain amount of bandwidth available.

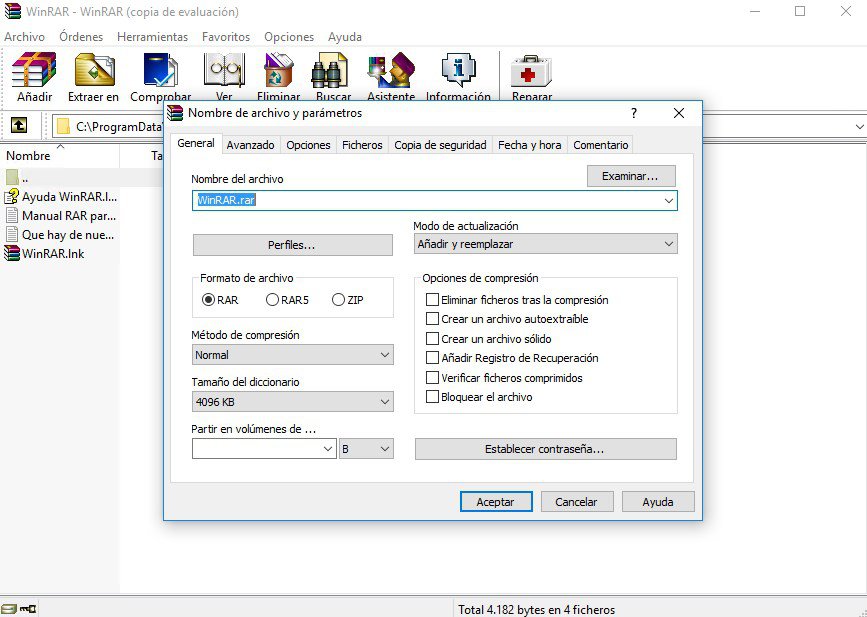

It is tempting to just look at the number of PCI-Express slots in the systems you’re evaluating and assume they’re all the same.
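To make the point about limited per-CPU bandwidth concrete, here is a rough back-of-the-envelope sketch in Python. The lane counts are the ones given for the Octoputer design (32 lanes from each CPU feeding four x16 slots through the switches, plus 8 remaining lanes). The per-lane rate is the standard PCI-Express 3.0 figure of 8 GT/s with 128b/130b encoding. The function and variable names are made up for illustration, and the results are theoretical peaks; real transfers will come in lower because of protocol overhead.

```python
# Rough PCI-Express 3.0 bandwidth arithmetic for the layout described in this post.
# Lane counts come from the text; names below are illustrative, not from any tool.

GEN3_GT_PER_S = 8.0        # raw transfer rate per lane (PCI-Express 3.0)
GEN3_ENCODING = 128 / 130  # 128b/130b line-encoding efficiency


def lane_gb_per_s() -> float:
    """Theoretical one-direction bandwidth of a single Gen3 lane, in GB/s."""
    return GEN3_GT_PER_S * GEN3_ENCODING / 8  # divide by 8 bits per byte


def link_gb_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth of a link with the given lane count."""
    return lanes * lane_gb_per_s()


def oversubscription(upstream_lanes: int, downstream_lanes: int) -> float:
    """Ratio of slot lanes behind the switches to the lanes feeding them."""
    return downstream_lanes / upstream_lanes


cpu_switch_lanes = 32    # two x16 links from each CPU to the PEX8747 switches
gpu_slot_lanes = 4 * 16  # four x16 slots per CPU behind those switches
extra_slot_lanes = 8     # remaining lanes per CPU (networking/storage slot)

per_slot = link_gb_per_s(16)
per_cpu_uplink = link_gb_per_s(cpu_switch_lanes)
extra_slot = link_gb_per_s(extra_slot_lanes)
ratio = oversubscription(cpu_switch_lanes, gpu_slot_lanes)

print(f"x16 slot:           {per_slot:.2f} GB/s per direction")
print(f"CPU uplink (x32):   {per_cpu_uplink:.2f} GB/s per direction")
print(f"extra x8 slot:      {extra_slot:.2f} GB/s per direction")
print(f"slot-to-uplink ratio behind the switches: {ratio:.0f}:1")
```

Under these assumptions, the four x16 slots on each CPU share a 32-lane uplink, so the 2:1 ratio only matters when all four cards move data to or from the CPU at the same time. Traffic that stays below a switch does not need to cross that uplink.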
